Introduction

The modern healthcare landscape is characterized by an ever-increasing volume of data and clinical complexity, rendering reliance on human cognition alone insufficient for ensuring patient safety [1]. Clinical Decision Support Systems (CDSS) have emerged as a cornerstone of health informatics, designed to augment clinical judgment by providing filtered, patient-specific information at the point of care [2]. This support is nowhere more critical than in Obstetrics, a specialty defined by time-sensitive decisions that carry profound implications for both maternal and fetal outcomes [3,4]. Conditions such as fetal distress, pre-eclampsia, and postpartum hemorrhage demand rapid and accurate interventions, making the field a prime candidate for technological augmentation.

The current state of the art has clearly demonstrated the technical feasibility and potential of CDSS in Obstetrics. Sophisticated machine learning models can now predict pre-eclampsia risk with high accuracy in the first trimester [5,6], and well-established rule-based systems effectively guide the assessment of various conditions. However, a significant disconnect persists between this technical potential and the realized impact of CDSS on error reduction in clinical practice, which remains inconsistent at best [7,8].

The central problem, and the focus of this paper, is that the pursuit of algorithmic precision has often overshadowed the critical challenges of implementation science [9,10]. A technically flawless system, defined here as one that performs well on metrics such as accuracy, F1 score, and recall in controlled testing environments [11], is rendered ineffective if clinicians suffering from alert fatigue ignore its recommendations, or if the system disrupts the time-sensitive workflows of a busy labour ward [12-14]. This paper critically examines the literature to identify what the current state of the art is missing. We argue that the next frontier in CDSS research must shift from what the system can do to how the system is used: how it integrates into the complex socio-technical environment of Obstetrics. This requires a deeper understanding of the human, organizational, and cognitive barriers that currently prevent CDSS from achieving its full potential [3,10].

Methods

Literature Compilation Strategy

This review employed a systematic, targeted search strategy to compile a comprehensive body of literature on CDSS in Obstetrics and Gynaecology (Obs&Gyn) and on the associated field of implementation science. The objective was to identify not only evidence of technical efficacy but also the critical barriers to real-world adoption and impact.

Search Strategy

The search was conducted across major academic databases, including PubMed, Scopus, and Google Scholar, using a combination of Medical Subject Headings (MeSH) and free-text terms. Key search strings were formulated to capture the core themes of the review:

- "Clinical Decision Support Systems" AND ("Obstetrics" OR "Gynaecology")

- "CDSS" AND "Error Reduction" AND "Maternity"

- "Alert Fatigue" AND "CDSS" AND "Implementation"

- "Automation Bias" AND "Clinical Decision Support"

- "Human-Centered Design" AND "Healthcare IT"
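The search strings above follow one pattern: synonyms for a single concept are joined with OR, and distinct concepts are joined with AND. A minimal sketch of that composition is shown below, assuming plain Boolean syntax; database-specific field tags (such as PubMed's `[MeSH Terms]` qualifier) are deliberately omitted, and the helper names `phrase`, `any_of`, and `all_of` are illustrative rather than part of any search tool.

```python
def phrase(term: str) -> str:
    """Quote a term so databases treat it as an exact phrase."""
    return f'"{term}"'


def any_of(*terms: str) -> str:
    """Join synonyms for one concept with OR; parenthesise when there are several."""
    quoted = [phrase(t) for t in terms]
    return quoted[0] if len(quoted) == 1 else "(" + " OR ".join(quoted) + ")"


def all_of(*concepts: str) -> str:
    """Join concept blocks with AND so that every concept must be present."""
    return " AND ".join(concepts)


# Each search is a list of concepts; each concept is a tuple of synonyms.
CONCEPT_SETS = [
    [("Clinical Decision Support Systems",), ("Obstetrics", "Gynaecology")],
    [("CDSS",), ("Error Reduction",), ("Maternity",)],
    [("Alert Fatigue",), ("CDSS",), ("Implementation",)],
    [("Automation Bias",), ("Clinical Decision Support",)],
    [("Human-Centered Design",), ("Healthcare IT",)],
]

SEARCH_STRINGS = [
    all_of(*(any_of(*synonyms) for synonyms in concepts)) for concepts in CONCEPT_SETS
]

for query in SEARCH_STRINGS:
    print(query)
```

Keeping the concepts as data means a new synonym is added in one place and every affected string is regenerated consistently; database-specific syntax can then be layered on top.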

Inclusion and exclusion criteria

The review prioritized high-quality, recent evidence to ensure currency and relevance.

Inclusion criteria were:

- Systematic reviews, meta-analyses, and randomized controlled trials (RCTs) published between 2020 and 2025.

- Studies reporting on the real-world implementation and evaluation of CDSS in clinical settings.

- Papers specifically addressing the impact of CDSS on diagnostic/management errors, clinician behavior, or organizational factors.

Studies were excluded if they were purely theoretical, focused solely on basic Electronic Health Record (EHR) features without a decision support component, or were published in non-peer-reviewed venues.

Compilation and synthesis

The initial search yielded over 300 titles. After a rigorous screening of abstracts, 55 core papers were selected for full-text review and synthesis. The findings were then categorized according to the three themes that structure the review (Technical Efficacy, Human and Cognitive Factors, and Organizational Challenges) to build a coherent narrative and analytical framework.

Results and discussion

Technical Efficacy and Applications

Systematic reviews and meta-analyses have firmly established the baseline efficacy of CDSS in maternity care, confirming that these systems can improve adherence to clinical guidelines and enhance patient outcomes [7,15]. The application of this technology in Obstetrics is broad, with several key areas of success. In risk prediction, machine learning algorithms are increasingly used to move beyond static guidelines toward dynamic, personalized risk assessments for conditions such as pre-eclampsia and intrauterine growth restriction [16-19]. Recent systematic reviews highlight the rise of AI-augmented CDSS, which leverage complex datasets to improve predictive accuracy for a range of pregnancy-related complications [4,20].

In diagnostics, computerized fetal heart rate analysis systems have demonstrated an ability to reduce inter-observer variability, a critical factor in intrapartum care. However, these systems often exemplify the trade-off between sensitivity and specificity, frequently suffering from high false-positive rates that can lead to unnecessary interventions [21]. This highlights a persistent challenge: while technical accuracy is high in controlled settings, the clinical utility is often moderated by the system’s potential to create new problems, such as over-investigation.

Human factors and cognitive load

A substantial body of literature, drawing from health informatics and human factors engineering, identifies the clinician-system interface as a primary source of implementation failure [2,10]. The most frequently cited non-technical barriers include:

- Alert Fatigue: Defined as the desensitization to safety alerts, leading to clinicians ignoring or overriding them, alert fatigue is a pervasive issue stemming from an excessive number of non-critical or poorly timed alerts [12,22]. This problem is particularly acute in high-stress, data-rich environments like the labour ward, where the cognitive burden is already high [13,14,23]. For example, a continuous fetal heart rate monitor may trigger frequent alarms for minor decelerations that are clinically insignificant, leading staff to become desensitized to the alarm’s sound and potentially miss a true critical event. Similarly, a CDSS might repeatedly flag a patient for a low-risk condition that has already been acknowledged by the clinical team, adding to the noise without providing new, actionable information. Recent research emphasizes the need for mitigation strategies such as tiered, context-aware alerting to ensure that only the most critical information is presented intrusively [24,25].

- Automation Bias and Trust: A significant cognitive barrier is automation bias, the tendency for clinicians to over-rely on a system’s recommendation, leading to a failure to apply independent clinical judgment and potentially causing errors of omission [9,26]. This is compounded by the “black box” nature of some AI models, which can erode trust and lead to either blind acceptance or outright rejection of the system’s output [27,28]. To determine the predictive validity of a machine learning model, it is essential to evaluate its performance using metrics such as **accuracy** (the proportion of all predictions, positive and negative, that are correct), **F1 score** (the harmonic mean of precision and recall), and **recall** (the proportion of actual positive cases that the model correctly identifies). Establishing and maintaining clinician trust is therefore a critical factor for adoption, requiring transparent, explainable AI (XAI) and robust validation [29,30].

- Workflow Integration and Usability: CDSS that are not seamlessly integrated into clinical workflows are consistently met with resistance and abandonment [13,31]. Systems that increase workload, demand excessive data entry, or disrupt established communication patterns are unlikely to be adopted, regardless of their technical merit [10]. This underscores the importance of a human-centered design approach, where the system is designed around the user’s needs and existing processes, rather than forcing the user to adapt to the system [10,32]. Usability evaluations must therefore extend beyond simple satisfaction surveys to encompass a holistic assessment of the system’s fit within the broader clinical ecosystem [33].
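The metrics named above can be computed directly from a confusion matrix. The sketch below is a minimal illustration using only the standard library; the counts in the usage example are hypothetical, chosen to resemble a rare intrapartum event, and are not drawn from any cited study.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConfusionCounts:
    tp: int  # true positives: event present, model flagged it
    fp: int  # false positives: no event, model flagged it anyway
    fn: int  # false negatives: event present, model missed it
    tn: int  # true negatives: no event, model stayed silent


def _safe_div(num: float, den: float) -> float:
    """Return num / den, or 0.0 when the denominator is zero."""
    return num / den if den else 0.0


def accuracy(c: ConfusionCounts) -> float:
    """Share of all predictions, positive and negative, that are correct."""
    return _safe_div(c.tp + c.tn, c.tp + c.fp + c.fn + c.tn)


def precision(c: ConfusionCounts) -> float:
    """Share of flagged cases that really had the event."""
    return _safe_div(c.tp, c.tp + c.fp)


def recall(c: ConfusionCounts) -> float:
    """Share of real events that the model flagged."""
    return _safe_div(c.tp, c.tp + c.fn)


def f1(c: ConfusionCounts) -> float:
    """Harmonic mean of precision and recall."""
    p, r = precision(c), recall(c)
    return _safe_div(2 * p * r, p + r)


# Hypothetical: 1,000 monitored labours, 20 true events.
counts = ConfusionCounts(tp=16, fp=80, fn=4, tn=900)
print(accuracy(counts), precision(counts), recall(counts), f1(counts))
```

With these hypothetical counts, accuracy is 0.916 and recall 0.8, yet precision is only about 0.17 and F1 about 0.28: five of every six alerts would be false alarms. Reporting accuracy alone would hide exactly the false-positive burden that drives alert fatigue.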

Organizational and governance challenges

The final theme explores the systemic issues that inhibit the successful and sustainable implementation of CDSS. Effective adoption is not a one-time technical project but a continuous process of organizational change that requires a robust governance framework [9,20]. A critical challenge is the lack of interoperability between health IT systems; achieving it requires not only technical compatibility but also regulation and oversight, including audits, by higher authorities. Standards such as HL7 FHIR (Fast Healthcare Interoperability Resources) are essential for creating a data-driven environment in which CDSS can access the necessary real-time information, yet their implementation remains inconsistent [34].
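To make the interoperability point concrete, the sketch below reads systolic and diastolic values from a FHIR R4 `Observation` resource in JSON form, the kind of payload a CDSS would receive from a FHIR server. It assumes the standard vital-signs blood-pressure layout, in which the panel carries two `component` entries coded with LOINC 8480-6 (systolic) and 8462-4 (diastolic). The sample resource is hand-written for illustration, and a real integration would also need authentication, search paging, and profile validation, none of which is shown.

```python
import json

LOINC_SYSTEM = "http://loinc.org"
SYSTOLIC_LOINC = "8480-6"
DIASTOLIC_LOINC = "8462-4"


def component_value(observation: dict, loinc_code: str):
    """Return valueQuantity.value of the component coded with loinc_code, or None."""
    for component in observation.get("component", []):
        codings = component.get("code", {}).get("coding", [])
        if any(c.get("system") == LOINC_SYSTEM and c.get("code") == loinc_code for c in codings):
            return component.get("valueQuantity", {}).get("value")
    return None


def blood_pressure(observation: dict):
    """Extract (systolic, diastolic) from a blood-pressure Observation."""
    if observation.get("resourceType") != "Observation":
        raise ValueError("expected a FHIR Observation resource")
    return (
        component_value(observation, SYSTOLIC_LOINC),
        component_value(observation, DIASTOLIC_LOINC),
    )


# Hand-written illustrative payload, not real patient data.
PAYLOAD = """{
  "resourceType": "Observation",
  "status": "final",
  "code": {"coding": [{"system": "http://loinc.org", "code": "85354-9"}]},
  "component": [
    {"code": {"coding": [{"system": "http://loinc.org", "code": "8480-6"}]},
     "valueQuantity": {"value": 152, "unit": "mmHg",
                       "system": "http://unitsofmeasure.org", "code": "mm[Hg]"}},
    {"code": {"coding": [{"system": "http://loinc.org", "code": "8462-4"}]},
     "valueQuantity": {"value": 98, "unit": "mmHg",
                       "system": "http://unitsofmeasure.org", "code": "mm[Hg]"}}
  ]
}"""

observation = json.loads(PAYLOAD)
print(blood_pressure(observation))
```

Matching on coded values rather than on display text is what makes the rule portable between sites: any system that emits the same LOINC codes can feed the same decision logic.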

Furthermore, the rise of AI-driven CDSS introduces complex ethical and legal ambiguities concerning accountability, algorithmic bias, and data privacy [10,35]. Healthcare organizations must establish clear governance structures to manage these risks, ensuring that systems are validated for fairness and equity across different patient populations [9]. Finally, successful implementation requires dedicated resources for ongoing maintenance, validation, and updating of the CDSS knowledge base—a crucial but often neglected aspect of the system lifecycle [9,10]. Frameworks such as the Non-adoption, Abandonment, and challenges to Scale-up, Spread, and Sustainability (NASSS) framework have been proposed to help organizations navigate this complex landscape by systematically assessing challenges across multiple domains, including the condition, technology, value proposition, adopters, organization, and wider system [10].

Proposed Human-Centered CDSS Model

The primary limitation identified in the literature is the high rate of alert fatigue, which is largely a product of systems that are clinically accurate but contextually ignorant [12,24]. Current CDSS often rely on static, rule-based triggers that are designed by human experts but may fail to account for the dynamic nature of clinical work. To address this, we propose a conceptual Context-Aware, Adaptive Alerting (CAAA) CDSS model. This model, which is not yet in clinical use, is intended to improve upon existing methods by dynamically adjusting alert severity and delivery based on both the patient’s real-time clinical trajectory and the clinician’s estimated cognitive load.

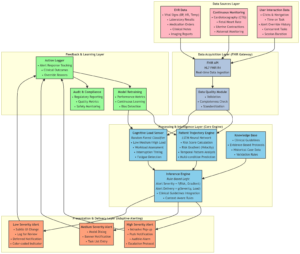

System Architecture and Data Flow

The CAAA system is designed as a modular, three-tier architecture that integrates with the hospital’s EHR via a Fast Healthcare Interoperability Resources (FHIR) API gateway to ensure interoperability [34]. Its three layers are described below:

- Data Acquisition Layer (FHIR Gateway)

This layer ingests two parallel data streams:

- Patient Data: Real-time data from the EHR, including vital signs (e.g., blood pressure), laboratory results, medication orders, and continuous monitoring data (e.g., cardiotocography traces). Data quality, which depends on how data are collected, stored, cleansed, and curated, is assessed using standardized tools to ensure reliability [36].

- Clinician Activity Data: Logs of user interaction with the EHR, such as time spent on specific tasks, number of open patient records, and recent alert override history. This data serves as a proxy for cognitive load.

- Processing and Intelligence Layer (The Core Engine)

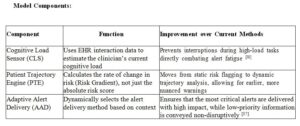

This layer houses the three main intelligence modules:

- Patient Trajectory Engine (PTE): A machine learning model (e.g., a Long Short-Term Memory (LSTM) network) trained on historical patient data to predict not only the risk of a critical event (e.g., pre-eclampsia, fetal distress) but also its imminence and velocity. It outputs a dynamic Risk Score and a Risk Gradient.

- Cognitive Load Sensor (CLS): A classification model (e.g., a Random Forest) that processes real-time clinician activity logs to categorize the user’s state as Low Load, Medium Load, or High Load. This serves as the primary mechanism for mitigating alert fatigue by preventing interruptions during critical tasks [25].

- Inference Engine: This rule-based module synthesizes the outputs of the PTE and CLS with pre-defined clinical guidelines. Its core logic is: Alert Severity = f(Risk Score, Risk Gradient) and Alert Delivery = g(Alert Severity, Cognitive Load).

- Presentation and Delivery Layer (Adaptive Alerting):

This layer selects the optimal alert delivery channel and format based on the Inference Engine’s output:

- High Severity, Low/Medium Load: An immediate, intrusive alert within the EHR, coupled with a dedicated mobile push notification to ensure receipt.

- Low Severity, High Load: A suppressed or deferred alert, logged for later review, or a subtle, non-intrusive visual cue (e.g., a color change on the patient’s EHR banner). This avoids contributing to cognitive overload during critical moments [24].
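The Inference Engine's functions f and g are left abstract above. The sketch below shows one possible choice, assuming that the PTE emits a risk score in [0, 1] at regular intervals, that the Risk Gradient is approximated by the least-squares slope of recent scores per hour, and that the thresholds (0.7, 0.4, and 0.05 per hour) are hypothetical placeholders rather than validated cut-offs. The two delivery rules stated in the text are implemented as written; the handling of the remaining severity/load combinations, including never suppressing a high-severity alert under high load, is our own assumption.

```python
from enum import Enum


class Severity(Enum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


class Load(Enum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


class Delivery(Enum):
    INTRUSIVE_EHR_AND_PUSH = "intrusive EHR alert + mobile push"
    STANDARD_EHR = "standard EHR alert"
    SUBTLE_BANNER_CUE = "non-intrusive banner colour change"
    DEFERRED_LOG = "deferred, logged for later review"


# Hypothetical placeholder thresholds, not clinically validated.
HIGH_RISK, MODERATE_RISK, STEEP_RISE_PER_H = 0.7, 0.4, 0.05


def risk_gradient(times_h, scores):
    """Least-squares slope of risk score per hour (a proxy for the PTE's Risk Gradient)."""
    n = len(times_h)
    if n < 2:
        return 0.0
    mean_t, mean_s = sum(times_h) / n, sum(scores) / n
    cov = sum((t - mean_t) * (s - mean_s) for t, s in zip(times_h, scores))
    var = sum((t - mean_t) ** 2 for t in times_h)
    return cov / var if var else 0.0


def alert_severity(risk_score, gradient):
    """f(Risk Score, Risk Gradient): a steeply rising risk is escalated one tier."""
    if risk_score >= HIGH_RISK:
        severity = Severity.HIGH
    elif risk_score >= MODERATE_RISK:
        severity = Severity.MEDIUM
    else:
        severity = Severity.LOW
    if gradient >= STEEP_RISE_PER_H and severity is not Severity.HIGH:
        severity = Severity(severity.value + 1)
    return severity


def alert_delivery(severity, load):
    """g(Alert Severity, Cognitive Load): choose the delivery channel."""
    if severity is Severity.HIGH:
        # Text: intrusive alert under Low/Medium load. Assumption: also under High load.
        return Delivery.INTRUSIVE_EHR_AND_PUSH
    if load is Load.HIGH:
        # Text: Low severity under High load is deferred; Medium gets a subtle cue (assumption).
        return Delivery.SUBTLE_BANNER_CUE if severity is Severity.MEDIUM else Delivery.DEFERRED_LOG
    # Assumption: outside High load, Medium is a standard alert and Low a subtle cue.
    return Delivery.STANDARD_EHR if severity is Severity.MEDIUM else Delivery.SUBTLE_BANNER_CUE


times_h = [0, 1, 2, 3]
scores = [0.30, 0.36, 0.42, 0.48]
gradient = risk_gradient(times_h, scores)        # about 0.06 per hour
severity = alert_severity(scores[-1], gradient)  # MEDIUM score escalated to HIGH
print(severity, alert_delivery(severity, Load.HIGH))
```

Under these assumptions, a moderate score that climbs steadily (0.30 to 0.48 over three hours) is escalated to high severity and delivered intrusively, whereas the same final score with a flat trajectory would remain medium severity. This is the trajectory-sensitivity that a static threshold rule cannot express.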

This architecture is intended to make the system not only clinically precise, meaning that it meets performance targets for accuracy, F1 score, and recall as determined by medical experts and system designers, but also contextually aware, directly addressing the human factors that plague current CDSS implementations.
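The contextual awareness described here rests on the Cognitive Load Sensor. The text specifies a Random Forest, but training one requires labelled activity data that do not yet exist, so the sketch below substitutes a transparent weighted-score heuristic over the three activity signals named in the Data Acquisition Layer (time on task, number of open patient records, recent alert overrides). The `ActivityWindow` fields, weights, and cut-offs are all hypothetical; the function only illustrates the input/output contract (activity summary in, Low/Medium/High label out) that a trained classifier would later fulfil.

```python
from dataclasses import dataclass
from enum import Enum


class Load(Enum):
    LOW = "Low Load"
    MEDIUM = "Medium Load"
    HIGH = "High Load"


@dataclass
class ActivityWindow:
    """Hypothetical summary of one clinician's recent EHR activity."""
    minutes_on_current_task: float
    open_patient_records: int
    overrides_last_hour: int


def estimate_load(window: ActivityWindow) -> Load:
    """Heuristic stand-in for the CLS classifier; weights and cut-offs are illustrative only."""
    score = (
        # Sustained focus on a single task, saturating at 30 minutes.
        min(window.minutes_on_current_task / 30.0, 1.0) * 0.4
        # Juggling several patients, saturating at 6 open records.
        + min(window.open_patient_records / 6.0, 1.0) * 0.4
        # Rapid overriding as a possible fatigue signal, saturating at 5 per hour.
        + min(window.overrides_last_hour / 5.0, 1.0) * 0.2
    )
    if score >= 0.66:
        return Load.HIGH
    if score >= 0.33:
        return Load.MEDIUM
    return Load.LOW


print(estimate_load(ActivityWindow(25, 5, 4)))
print(estimate_load(ActivityWindow(2, 1, 0)))
```

Keeping the interface fixed means that, once labelled data are available, the same features could be passed to a trained classifier (for example scikit-learn's `RandomForestClassifier`) behind the same `estimate_load` signature without changing the Inference Engine.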

Hypothetical Implementation and Evaluation Plan

A phased approach would be essential for the successful development and deployment of the CAAA model:

- Phase 1: Human-Centered Design and Workflow Analysis: Conduct ethnographic studies and co-design workshops with Obs&Gyn clinicians to map user workflows, identify cognitive bottlenecks, and establish preferences for adaptive alert presentation [38].

- Phase 2: Model Development and Training: Collect de-identified historical EHR data to train and validate the PTE and CLS models. This phase would require close collaboration with data scientists and clinical informaticists to mitigate potential biases in the training data [23].

- Phase 3: Pilot Implementation and Evaluation: Integrate the CAAA model into a sandboxed EHR environment for a pilot study. Conduct a randomized controlled trial (RCT) comparing the CAAA system to a traditional, static CDSS. Primary outcomes would include Alert Override Rate and clinician satisfaction (measured via validated instruments like the System Usability Scale). Secondary outcomes would include Time to Intervention for true positive events and diagnostic accuracy [38].
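The two quantitative outcomes in Phase 3 are simple to define but easy to compute inconsistently across trial arms. The sketch below assumes that each alert is logged with its arm, whether it was overridden, whether it was later adjudicated as a true positive, and, for true positives that led to action, the minutes from alert to intervention. The `AlertRecord` layout and arm labels are hypothetical, and the log in the example is toy data, not results. The median is used for Time to Intervention because it is less sensitive than the mean to a few very long delays.

```python
from dataclasses import dataclass
from statistics import median
from typing import List, Optional


@dataclass
class AlertRecord:
    arm: str                 # hypothetical labels: "CAAA" or "static"
    overridden: bool         # clinician dismissed or overrode the alert
    true_positive: bool      # adjudicated after the fact
    minutes_to_intervention: Optional[float] = None  # only for actioned true positives


def override_rate(records: List[AlertRecord], arm: str) -> float:
    """Overridden alerts divided by all alerts shown in that arm."""
    shown = [r for r in records if r.arm == arm]
    return sum(r.overridden for r in shown) / len(shown) if shown else float("nan")


def median_time_to_intervention(records: List[AlertRecord], arm: str) -> float:
    """Median minutes from alert to intervention for true-positive alerts."""
    times = [
        r.minutes_to_intervention
        for r in records
        if r.arm == arm and r.true_positive and r.minutes_to_intervention is not None
    ]
    return median(times) if times else float("nan")


# Toy log for illustration only.
log = [
    AlertRecord("static", True, False),
    AlertRecord("static", True, False),
    AlertRecord("static", False, True, 18.0),
    AlertRecord("static", True, False),
    AlertRecord("CAAA", False, True, 9.0),
    AlertRecord("CAAA", True, False),
    AlertRecord("CAAA", False, True, 12.0),
]
for arm in ("static", "CAAA"):
    print(arm, override_rate(log, arm), median_time_to_intervention(log, arm))
```

Fixing these definitions in the trial protocol before data collection keeps the comparison between the CAAA and static arms like-for-like.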

Future Perspectives

Any machine learning model is only as valid as the data used to train and validate it. It is therefore crucial that a model be trained and validated on data from the center where it will be used for final decision-making. Governance and ethical oversight of such a project rest first with the authorities of the healthcare center and then with the regulatory authorities of the relevant city and country. Although multi-site RCTs are the gold standard for validation, they depend on every data point relevant to the task being collected in a standardized way across sites. This requires meticulous checkpoints, limits, and flowcharts that fit the management environment of each individual center while remaining aligned with the rest of the group, which may not always be possible in real-world settings.

It is also important to acknowledge the limitations and advantages of each machine learning model. For example, while deep learning models can achieve high accuracy, they are often considered “black boxes” due to their lack of interpretability. In contrast, simpler models like decision trees are more transparent but may not offer the same level of performance. A thorough understanding of these trade-offs is essential for selecting the appropriate model for a given clinical problem [37].

Conclusion

The process of compiling this review has reinforced the central thesis that the future success of Clinical Decision Support Systems (CDSS) in Obstetrics and Gynaecology hinges on a decisive pivot from purely technical innovation to a deep and sustained focus on implementation science. The initial aim of synthesizing the impact of CDSS quickly evolved into a more critical investigation of the barriers preventing that impact, a direction strongly dictated by the current body of literature. The evidence clearly indicates that while algorithms are powerful, they are not a panacea. The path to meaningful error reduction is paved with human-centered design, robust organizational strategy, and a nuanced understanding of clinical cognition.

Reflection on method

The primary limitation of this review methodology is its reliance on published literature, which may be subject to publication bias, often highlighting successful, well-funded implementations while underreporting failures or partial successes. A more comprehensive approach would integrate qualitative field studies, such as ethnography or direct observation within diverse Obs&Gyn units, to capture the tacit knowledge and unwritten workflow challenges that are seldom reported in quantitative studies [11]. Such research would provide invaluable, context-rich data to inform the design of the next generation of CDSS.

Author contributions

Mohamed Abdelrahman wrote the first draft. Prof. Hassan Rajab, Dr. Rawia Ahmed and Dr. Mohamed Elshaikh reviewed the paper before submission. All authors approved the final version.

Ethical approval

No ethical approval was required for this review.